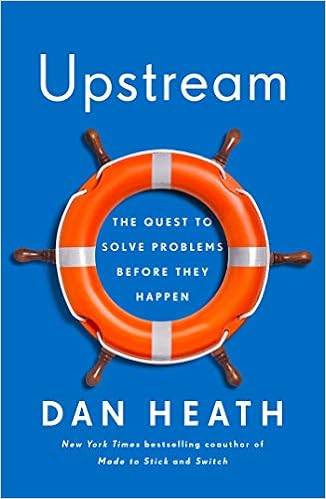

Dan Heath has just published a new book, Upstream: The Quest to Solve Problems Before They Happen.

I just got the book, and am reading it now. I think there is going to be a lot of good material to discuss here.

But this post is about an excerpt in a marketing email that really resonated with me, and I want to discuss that. I wrote to Dan Heath, and got his permission to use pieces of the excerpt here. (Thank you, Dan.)

Management By Measurement = “Ghost Victories”

I have talked about what I call “management by measurement” in the past. In that post I told a true story of a company that placed very heavy emphasis on reducing inventory levels without digging into how that performance was achieved. The net result was an embarrassed CEO during a quarterly analysts’ call. Not good.

Dan Heath talks about the same thing in Upstream. He calls it “ghost victories.”

[when] there is a separation between (a) the way we’re measuring success and (b) the actual results we want to see in the world, we run the risk of a “ghost victory”: a superficial success that cloaks failure.

The most destructive form of ghost victory is when your measures become the mission. Because, in those situations, it’s possible to ace your measures while undermining your mission.

He goes on to describe a case in the U.K. where the Department of Health established penalties for wait times longer than four hours in the Emergency Departments. And it worked. Wait times were reduced – at least on paper. Then the facts began to emerge:

In some hospitals, patients had been left in ambulances parked outside the hospital—up until the point when the staffers believed they could be seen within the prescribed four-hour window. Then they wheeled the patients inside.

In the old post I referenced above, I said:

If making the numbers (or the sky) look good is all that matters, the numbers will look good. As my friend Skip puts it so well, this can be done in one of three ways:

- Distort the numbers.

- Distort the process.

- Change the process (to deliver better results).

The third option is a lot harder than the other two. But it is the only one that works in the long haul.

All of this ties very well to Billy Taylor’s keynote at KataCon6 where he talked about the difference between “Key Activities” and “Key Indicators.” It is only when we can get down to the observable actions and understand the cause-and-effect relationship between those actions and the needle we are trying to move that we will have any effect.

To avoid the “Ghost Victory” trap, Dan recommends “pre-gaming” your metrics and thinking of all of the ways it would be possible to hit the numbers while simultaneously damaging the organization. In other words, get ahead of the problem and solve it before it happens.

He proposes three tests which force us to apply different assumptions to our thinking.

The lazy bureaucrat test

Imagine the easiest possible way to hit the numbers – with the least amount of change to the status quo. The story I cited above about inventory levels is a great example.

I can make my defect rates improve by altering the definition of “defect.”

There are lots of accounting games that can be played.

This is one to borrow from Skip’s list – How can the underlying numbers be distorted to make this one look good when it really isn’t?

The “rising tides” test

What external factors would have a significant impact on this metric? For example, I was working at a large company where a significant part of their product cost was a commodity raw material. As the market price went down, “costs” went down, and bonuses all around. But when the market price went up, “costs” went up and careers were threatened and bad reviews issued.

Those shifts in commodity prices had nothing to do with how those managers were doing their jobs. The tide rose and fell, and their fortunes with it.

The question in my mind is “What things would make this number look good, or bad, without any effort or change in the process we are trying to measure?”

The defiling-the-mission test

Hmmm. This is a tough one. (not really)

And it is a really common problem in our world of quarterly and annual expectations. In what ways could meeting these numbers in the short term ultimately hurt our reputation, our business, in the long term?

For example, I can think of an ongoing story of a product development project that hit its cost and schedule milestones (what was being measured). But they did so at the cost of destroying their reputation with customers, their Federal regulators and the public (and, to a large extent, their employees). They now have a new CEO, but the deeper problem has origins in the late 1990s.

How long will it take them to recover? That story is still playing out.

In another case I was in a meeting with a team that was discussing a customer complaint. The ultimate cause was a decision to substitute a cheaper material to reduce production costs. But this is a premium brand. There was a great question asked there: “Which of our values did we violate here?” – so the introspection was awesome.

Next step? Ask that question before the decision is made: “Is this decision consistent with our values?” If that makes you uncomfortable, then it is time to look in the mirror.

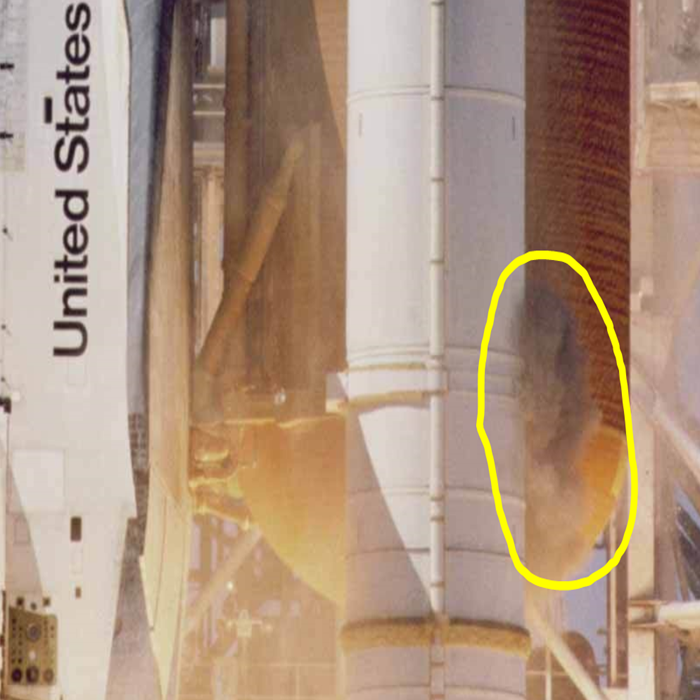

Then there is this little incident from 2010:

The metric was cost and schedule. Which makes sense. But the behavior that was driven was cutting corners on safety.

Getting Ahead of Problems

The book’s subtitle says it is about getting ahead of problems. I am looking forward to reading it and writing something more comprehensive.
