Credit: A lot of stuff comes across my screen, sometimes more than once. I have seen this illustration several times, but don’t remember where it originally came from. If you created it, PLEASE comment so I can give appropriate credit.

Improvement is messy. Science is messy. Research and Development is messy. These are all cases where we want an outcome, are pretty sure what that outcome looks like, but there isn’t really a clear path to get there.

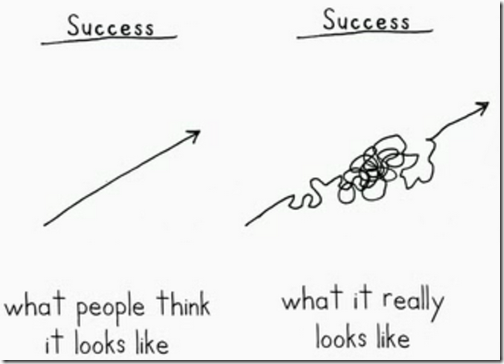

This illustration shows two very different mindsets.

On the left is how many people believe the improvement / organizational development / learning process works. We set out a phased project plan to transform the organization. We carry out a set of pre-defined steps, and when they are completed, we are at the goal.

Sadly, our education process is largely constructed on this model: take the classes, pass the tests, and the student is educated.

This thinking is appropriate when the outcome is something we have done before, like constructing a house. Even if this house is a bit different, the builders have rich experience in carrying out all of the constituent process steps. Further upstream, the designer carefully followed building and construction codes. In those cases, we are applying a rich body of experience (or the documented experiences of others) to reach the end goal.

These tasks can be scheduled and sequenced in a project plan. The steps are known, the result is known.

On the right is the hairball of learning and discovery.

We know where we are starting.

We know where we want to end up.

We are reasonably certain we can get from Point A to Point B. But we don’t know precisely which detailed steps or experiments will give us the results we need.

We don’t know for sure that the result of the next action step will be a foregone conclusion.

Each step gives us more information about the next step.

In the world of continuous improvement, we live in an interesting paradox.

We often believe we are operating in the set-piece model on the left. We see a situation, we apply tools to duplicate a solution we have seen work in a similar situation before.

An interesting thing happens at this point. The Real World intervenes, and we discover that the situation was actually more like the right side. We thought we knew the right answers. We might have even been mostly right. But at the detail level, things don’t work quite as we predicted.

Now… if the system has been deliberately set up to flag those anomalies, and we are recording them and are curious to discover what we didn’t understand, then we are in learning mode.

But all too often the experts who guided the original installation have moved on, leaving the people executing the process behind. The assumption was “It works, we can go to the next problem.”

The people in the process did what they were told, or guided, to do. We might have even asked for their input on the details. But that process rarely leaves them with the additional skills they need to deal with anomalies in ways that make the process more robust.

So they do what they must to get things done. Mostly that means adding some inventory here, some additional checks or process steps there. Over time, the process erodes back to something close to its original state.

Why? Because we thought we knew everything… but were wrong. And didn’t notice.

This model applies to any effort to shift how an organization performs and interacts. We outrun our headlights and take on the Big Change because that company “over there” does it, and we just copy the end result.

What we missed was doing the work.

More about this later.

Mark,

Sage advice, but, of course, you are painting with a broad stroke here, as this applies to all of human experience and interaction.

However, it is always good to realize that no matter how hard we plan and try, we will still have to walk the full path (do the work). If our Western society were not so set on instant pudding, TV on demand, fast food, and instant success, perhaps we might plan for the long haul instead of the next news cycle or quarterly earnings report.

We have trained ourselves to demand instant success, which is a recipe for constant failure.